Agentic AI in education refers to autonomous AI systems (often called AI agents) that can understand a student’s needs, make decisions, and take action without waiting for learner input. It’s like shifting from GPS navigation to autopilot. With GPS, you follow step-by-step directions. With autopilot, the system knows the destination and drives you there on its own.

As an AI solutions development company building AI for EdTech and Education, we at 8allocate know this landscape firsthand. In this article, we unpack the top use cases of AI agents in education, architecture patterns that work in production, key risks to plan for, and a phased implementation roadmap from pilot to scale.

TL;DR: Agentic AI in Education

- Agentic AI in education refers to autonomous AI systems (AI agents) that can plan, make decisions, and take actions across multiple systems to achieve defined educational goals.

- Unlike traditional EdTech, which delivers static tools and courses, or GenAI, which generates content on request, agentic AI in education sets an objective and executes multi-step workflows to reach it.

- Gartner projects that 40% of enterprise applications will embed task-specific AI agents by the end of 2026.

- 86% of organizations plan to increase investment in agentic AI.

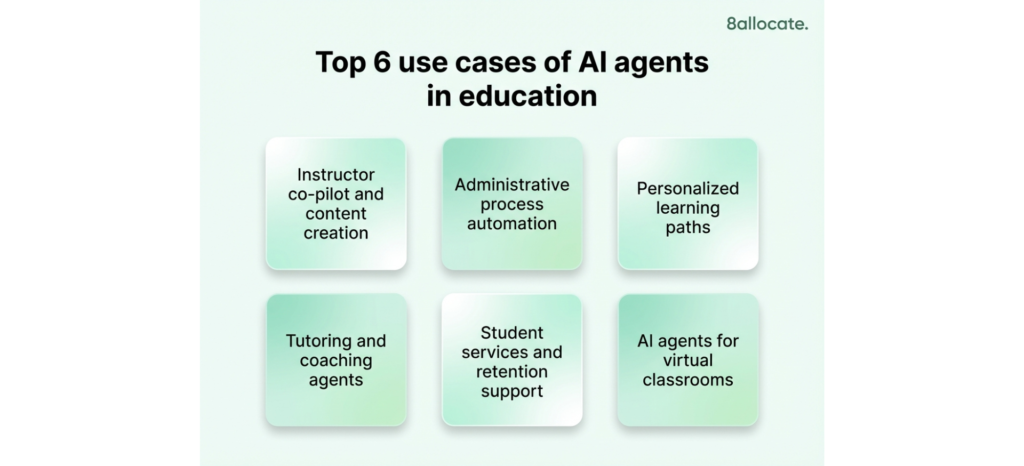

- Top use cases of AI agents in education include personalized learning paths, autonomous tutoring, student retention and alumni engagement, instructor co-pilots, administrative automation, and AI-powered virtual classrooms.

- Multi-agent orchestration is the backbone of production-grade agentic AI. By 2027, around 70% of multi-agent systems will consist of specialized agents.

- Top 2026 trends in AI agents in education include agents becoming core product infrastructure, multi-agent systems replacing single-task bots, AI fluency emerging as a hiring requirement, and personalized corporate training going agentic.

- AI agent development cost typically ranges from $50,000-$250,000+, with ongoing run costs of $4,000–$25,000+ per month depending on scale, complexity, and governance requirements.

What Is Agentic AI in Education, and Why Now?

In education, AI agents can autonomously adjust a lesson plan when students fall behind, proactively reach out to a disengaged learner, or process a stack of administrative requests overnight (without step-by-step human commands). Unlike traditional edtech tools or basic chatbots that react to commands, these AI agents adapt in real time. They personalize learning paths, identify students who may be at risk, and automate campus operations in a proactive way.

Why is agentic AI in education accelerating now? Here are the key factors:

- Generative AI breakthroughs. Large language models can now reason across context, use external tools, and chain multi-step actions – the core capabilities that make true “agency” possible. Gartner projects that 40% of enterprise applications will embed task-specific AI agents by the end of 2026.

- Market and student demand. Around 84% of college students already use AI tools in coursework, yet only 18% feel prepared to use AI professionally. That gap puts pressure on universities to integrate AI into teaching and upskill learners. EdTech companies see agentic AI as key to meeting market expectations. The AI in education market reflects this urgency: it is projected to grow from $7.05 billion in 2025 to $32.27 billion by 2030.

- Institutional initiatives and partnerships. High-profile collaborations are validating the agentic AI trend. For example, Northeastern University partnered with Anthropic to deploy Claude AI across all campuses. Duke University gave every undergraduate secure GPT-4 access under a university-managed license. At the same time, Andreessen Horowitz identified AI-native education as a Big Idea for 2026, and Microsoft is building toward “Frontier Firms” where agents are the core workforce.

How agentic AI differs from GenAI and traditional EdTech

Traditional EdTech is primarily software and learning platforms that deliver education through predefined workflows. Generative AI (GenAI) is the language and content engine: it creates explanations, practice questions, and feedback the moment someone asks. Agentic AI is GenAI evolved into a goal-driven operator. It can plan steps, use tools such as an LMS, take actions, and verify outcomes (under clear guardrails).

| Criteria | Traditional EdTech | GenAI | Agentic AI |
| --- | --- | --- | --- |
| What it is | Learning platforms and tools with predefined flows | A model that generates new content or answers | A goal-driven system that uses AI and external tools to execute multi-step work, leveraging machine learning and natural language processing for autonomy |
| “Job title” analogy | Curriculum platform / LMS | Copywriter + tutor on demand | Teaching assistant + operations coordinator |
| Main output | Courses, quizzes, dashboards, reports | Explanations, lesson drafts, feedback, examples | Completed tasks: plans built, messages sent, grading done, follow-ups triggered, content updated |
| How it works | If-this-then-that rules, standard analytics, static content paths | Prompt → generates response (often with retrieval from documents) | Goal → plan → call tools → verify → iterate → escalate when needed |
| Typical user experience | “Click through modules” | “Chat with AI” | “Tell it the goal and it runs the workflow” (with checkpoints) |
| Best fit in learning | Delivery and tracking at scale | Faster content creation, better explanations, tutoring-like Q&A | End-to-end learning operations (tutoring, grading, progress monitoring) |
| Tool integration and actionability | Integrated systems but rule-based | Mostly “talks/writes” unless connected | Uses tools (LMS, calendar, email, CRM) to take actions |
| Governance and risk | Mature (permissions, roles, logs) | Requires safety filters with data controls | Requires strong action controls (e.g., approval gates, sandboxing, monitoring, rollbacks) |
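
To make the “Goal → plan → call tools → verify → iterate → escalate” row concrete, here is a minimal sketch of that loop in Python. It is an illustration under stated assumptions, not a production design: `run_agent`, `demo_plan`, `demo_tools`, and `demo_verify` are hypothetical names, and the “tools” are stubs rather than real LMS calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentRun:
    goal: str
    log: list = field(default_factory=list)
    escalated: bool = False


def run_agent(goal: str,
              plan: Callable[[str], list],
              tools: dict,
              verify: Callable[[str, str], bool],
              max_attempts: int = 2) -> AgentRun:
    """Goal -> plan -> call tools -> verify -> iterate -> escalate."""
    run = AgentRun(goal)
    for step in plan(goal):
        for attempt in range(1, max_attempts + 1):
            result = tools[step](goal)
            run.log.append(f"{step} attempt {attempt}: {result}")
            if verify(step, result):
                break  # step verified, move on to the next one
        else:
            # Every attempt failed verification: hand over to a person.
            run.escalated = True
            run.log.append(f"{step}: escalated to human")
            break
    return run


# Hypothetical stand-ins for a planner, LMS tools, and a verifier.
def demo_plan(goal):
    return ["fetch_quiz_scores", "draft_study_plan"]


demo_tools = {
    "fetch_quiz_scores": lambda goal: "scores: 55, 62, 48",
    "draft_study_plan": lambda goal: "",  # simulates a tool that returns nothing
}


def demo_verify(step, result):
    return bool(result)  # assumption: an empty result counts as a failed step


run = run_agent("help student improve in calculus",
                demo_plan, demo_tools, demo_verify)
for line in run.log:
    print(line)
print("escalated:", run.escalated)
```

The retry limit and the escalation branch are the parts that separate this from a plain prompt-and-response flow: the loop stops and asks for a human instead of continuing on an unverified result.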

Top Use Cases of AI Agents in Education

Agentic AI isn’t a single tool. It’s an approach that enhances learning, teaching, and operations simultaneously. Below are the top use cases of agentic AI in education, each showing how agents deliver value.

Personalized and adaptive learning paths

AI agents make true 1:1 learning possible at scale. These agents act as always-on recommender systems that tailor curriculum to each student’s needs in real time. Key capabilities include:

- Diagnostic assessments. An AI agent administers a short pre-quiz and directs the student to the right starting point based on skill gaps.

- Adaptive content recommendations. As the student progresses, the agent suggests specific readings, videos, or exercises aligned to performance and learning style, creating a personalized learning experience.

- Real-time intervention. The agent predicts when a learner is about to get stuck and offers help proactively, providing instant feedback to support student progress.

- Conversational coaching. Advanced agents engage students in dialogue, ask guiding questions, and give feedback on open-ended responses. For instance, Khanmigo, powered by Microsoft, already serves 400,000+ educators across 50+ countries using this model.
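
As a rough illustration of the diagnostic step above, the sketch below places a student at the first module whose skill falls below a mastery cut-off. The threshold, the course sequence, and the function name `starting_module` are all hypothetical; a real agent would draw scores from the LMS and use a richer skill model.

```python
MASTERY_THRESHOLD = 0.7  # hypothetical cut-off: share of pre-quiz items answered correctly


def starting_module(course_sequence, pre_quiz_scores, threshold=MASTERY_THRESHOLD):
    """Return the first module whose skill the student has not yet mastered."""
    for module, skill in course_sequence:
        # A skill missing from the pre-quiz is treated as not mastered.
        if pre_quiz_scores.get(skill, 0.0) < threshold:
            return module
    return None  # every skill mastered: nothing to remediate


# Hypothetical course sequence: (module, skill it teaches), in teaching order.
algebra_course = [
    ("Module 1: Fractions", "fractions"),
    ("Module 2: Linear equations", "linear_equations"),
    ("Module 3: Quadratics", "quadratics"),
]
scores = {"fractions": 0.9, "linear_equations": 0.5, "quadratics": 0.2}
print(starting_module(algebra_course, scores))
```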

Autonomous tutoring and coaching agents

These agents go beyond static content delivery by providing real-time, personalized support. Intelligent tutoring systems act as digital tutors, adapting to individual learners’ needs (examples include platforms like Duolingo). AI assistants automate administrative tasks like grading, assessments and providing feedback. Virtual assistants also allow students to receive help outside of regular school hours.

These agents adapt explanations based on each learner’s history and preferences, such as switching to visual diagrams if that approach works best. They also retain context over time, remembering past struggles and effective strategies to provide more relevant support. Designed with guardrails, they encourage critical thinking rather than giving direct answers.

Student services and retention support

AI agents in education for student retention turn reactive support into predictive intervention. Universities are deploying agents across the full student journey: admissions, advising, career services, and alumni engagement. Key use cases include:

- Early alert systems. Agents monitor LMS activity, grades, engagement, and attendance to spot drop-off patterns in real time. If a student hasn’t logged in for a week, the agent can automatically send a check-in: “I noticed you missed some classes, everything okay? Here are resources that might help.” This proactive outreach prompts re-engagement before it’s too late.

- Personalized nudges. AI agents send personalized messages, such as motivating deadline reminders or congratulatory notes after a good score, along with suggestions to book a tutor for upcoming challenging units.

- Virtual assistants for student inquiries. AI agents answer common questions 24/7, such as “How do I change my major?” or “What’s the drop deadline?” They escalate to a human educator only when necessary.

- Admissions and recruiting. One study found that AI recruiting agents were better received by prospective students than human recruiters in early admissions stages. By answering FAQs, guiding applicants, and following up consistently, these agents increase enrollment yield without adding headcount.

- Alumni engagement. Agents are also extending beyond current students. They help universities maintain long-term relationships with alumni through personalized outreach, event recommendations, and career networking nudges at scale.
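
The early-alert rule described above (“hasn’t logged in for a week”) can be sketched in a few lines. This is a simplified example with made-up student names and dates; `draft_check_ins` is a hypothetical helper that only drafts messages, leaving delivery and any human review to the surrounding system.

```python
from datetime import date, timedelta

INACTIVITY_THRESHOLD = timedelta(days=7)  # "hasn't logged in for a week"

CHECK_IN_TEMPLATE = (
    "Hi {name}, I noticed you haven't logged in for {days} days. "
    "Is everything okay? Here are resources that might help."
)


def draft_check_ins(last_logins, today):
    """Return {student_name: draft message} for students inactive a week or more."""
    drafts = {}
    for name, last_login in last_logins.items():
        inactive_for = today - last_login
        if inactive_for >= INACTIVITY_THRESHOLD:
            drafts[name] = CHECK_IN_TEMPLATE.format(name=name, days=inactive_for.days)
    return drafts


# Hypothetical data: in practice this would come from LMS activity logs.
last_logins = {"Ana": date(2026, 3, 1), "Ben": date(2026, 3, 9)}
drafts = draft_check_ins(last_logins, today=date(2026, 3, 10))
for message in drafts.values():
    print(message)
```

A production agent would likely combine several signals (grades, attendance, engagement) rather than a single login threshold.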

Instructor co-pilot and content creation

AI agents cut instructor preparation and grading time by up to 30%. These agents act as intelligent teacher’s aides, operating under the educator’s direction. Key applications include:

- Automated content generation. The agent drafts lesson outlines, lesson plans, slide decks, and quiz questions at varying difficulty levels. Faculty review and refine, but the AI provides a valuable head start.

- Assessment support. Agents can evaluate structured responses, flag issues, and provide feedback before final submission. For example, Grammarly’s AI Grader agent evaluates draft essays against a provided rubric. At Florida State University, faculty use Microsoft Copilot Studio to build custom grading and feedback agents without writing code.

- Administrative assistance. Agents compile engagement analytics, answer common student emails, summarize which topics confused the class most, and more. By automating routine tasks, AI agents enable educators to focus more on creative tasks such as personalized instruction and curriculum development. AI agents can save teachers 8-10 hours per week by automating these routine tasks.

- Content updating. Agents scan course material to flag outdated examples (e.g., a statistics course using 2010 data) and suggest current references, ensuring curriculum stays fresh and aligned to standards.

Europe’s Open Institute of Technology (OPIT) deployed a faculty support agent for instructional materials and self-assessment tools, cutting grading and correction time by 30%. Rather than replacing professors, agentic AI in this context augments them.

Administrative process automation

AI agents handle the operational busywork that bogs down university staff, faster, more accurately, and around the clock.

- Scheduling and logistics. Agents coordinate room availability, instructor preferences, and student course combinations to propose optimal schedules and autonomously resolve conflicts when double-bookings occur.

- Data entry and processing. When a student submits a change-of-major request, an agent can validate it, update the Student Information System (SIS), notify departments, and initiate approval workflows.

- Anomaly detection. In finance or IT, agents monitor for spikes in help desk tickets or enrollment data inconsistencies, then trigger a resolution protocol: alerting the right team with diagnostic data or correcting minor errors on their own.

- Multistep workflow orchestration. Agents can coordinate complex processes across systems. For example, in financial aid verification, they can automatically cross-check data from multiple platforms overnight. These agents connect tasks in sequence, apply conditional logic, and handle exceptions through reasoning rather than fixed rules.

A real-world example: Broward County Public Schools deployed agents via Microsoft Copilot Studio for contract management and SIS support, enabling non-technical staff to automate complex admin workflows.

Explore how AI agents in data analytics can improve and speed business intelligence in our article “AI Agents for Data Analysis in 2026: What They Are and How They Change BI.”

AI agents for virtual classrooms

These agents are like a co-pilot for teachers in online/hybrid classes.

During the lesson, they watch simple engagement signals and can nudge the teacher with ideas like “run a quick poll” or “do a short breakout” or suggest interactive exercises to boost student engagement. These interactive exercises, powered by AI, can adapt in real time and provide instant feedback to students, helping personalize learning and immediately assess performance. After the lesson, these agents handle the admin work, such as transcripts, summaries, notes, and study guides.

For example, Zoom’s AI Companion, now embedded across Zoom Workplace for Education, provides live lecture summaries, automated meeting notes, custom study guides, transcriptions, and language translations.

Architecture Patterns for Implementing AI Agents

Deploying agentic AI in education is not as simple as turning on a chatbot. These autonomous systems require thoughtful architecture to perform reliably, integrate with existing tools, and remain under appropriate control. Below are the key patterns and considerations for getting this right.

Multi-agent orchestration with an “autonomy layer”

The shift is already visible in the market. Gartner reports a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025, with 70% of multi-agent systems expected to consist of specialized agents by 2027.

This architectural pattern means designing a team of specialized AI agents rather than one monolithic AI. A lead orchestrator agent breaks complex tasks into subtasks and assigns them to agents optimized for specific roles. For example, an AI Teaching Assistant handling “help this student improve in calculus” could activate a tutor agent for problem-solving and a motivation agent for engagement, working in parallel and delivering one unified response.
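
A minimal sketch of this orchestrator pattern, assuming two stubbed specialists: the orchestrator fans a task out to a tutor agent and a motivation agent in parallel and merges their output into one response. The agent functions and the `orchestrate` helper are hypothetical placeholders; in a real system each specialist would wrap a model call with its own prompt and tools.

```python
from concurrent.futures import ThreadPoolExecutor


def tutor_agent(task):
    # Placeholder for a model call specialized in problem-solving.
    return f"Practice set for: {task}"


def motivation_agent(task):
    # Placeholder for a model call specialized in engagement.
    return f"Encouragement message for: {task}"


SPECIALISTS = {"tutor": tutor_agent, "motivation": motivation_agent}


def orchestrate(task, roles):
    """Run the chosen specialists in parallel and merge into one response."""
    with ThreadPoolExecutor(max_workers=len(roles)) as pool:
        futures = {role: pool.submit(SPECIALISTS[role], task) for role in roles}
        parts = {role: future.result() for role, future in futures.items()}
    # Keep a stable order so the unified response reads the same every run.
    return "\n".join(f"[{role}] {parts[role]}" for role in roles)


response = orchestrate("help this student improve in calculus",
                       ["tutor", "motivation"])
print(response)
```

Because each specialist sits behind the same simple interface, adding a new role means registering one more entry in `SPECIALISTS` rather than rebuilding the orchestrator.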

Benefits of multi-agent architecture for education and EdTech:

- Specialization. Each agent is optimized for a clear role, improving quality and reliability.

- Parallel work. Multiple agents handle different subtasks simultaneously.

- Modularity. New agentic capabilities can be added without rebuilding the entire system.

- Easier scaling and control. Clear role boundaries make monitoring, governance, and performance optimization simpler.

Guardrails: policies, constraints, and human oversight

Around 86% of organizations plan to increase investment in agentic AI. Yet only 6% trust it (HBR Analytic Services, 2025). How do we close the gap? Build safeguards at 5 levels of AI agent autonomy, one level at a time.

Here are the guardrail layers to secure agentic AI in education:

- Instruction-level policies. Each agent gets a clear scope and constraints.

- Content filtering. Agents use moderation models to catch harassment, hate speech, or cheating facilitation.

- Tool and data access control. Every agent that touches student PII needs role-based access, audit trails, and data minimization by design. As an EdTech provider, you must comply with FERPA, GDPR, and the EU AI Act.

- Human-in-the-loop. Tutors and teachers supervise critical junctures.

- Fail-safes. If a safety check fails, the agent must be rolled back to “recommend only” mode (tool execution disabled). If a cost limit is breached, it must be switched to a cheaper model.
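
Several of these layers can be expressed as one small policy check. The sketch below combines an allow-list of tools per role, a human-approval gate, and the “recommend only” fail-safe. The role names, tool names, and the `Guardrail` class are illustrative assumptions, not a reference to any specific framework.

```python
from dataclasses import dataclass

# Hypothetical allow-list: which tools each agent role may call at all.
ROLE_TOOLS = {
    "advising_agent": {"read_degree_audit", "send_message"},
    "tutor_agent": {"read_quiz_results"},
}

# Hypothetical set of actions that always wait for a person to approve.
NEEDS_HUMAN_APPROVAL = {"send_message"}


@dataclass
class Guardrail:
    recommend_only: bool = False  # fail-safe mode: tool execution disabled

    def decide(self, role: str, tool: str) -> str:
        if tool not in ROLE_TOOLS.get(role, set()):
            return "deny"            # tool is outside the role's scope
        if self.recommend_only:
            return "recommend"       # suggest the action, do not execute it
        if tool in NEEDS_HUMAN_APPROVAL:
            return "needs_approval"  # human-in-the-loop checkpoint
        return "execute"


guardrail = Guardrail()
print(guardrail.decide("tutor_agent", "send_message"))
print(guardrail.decide("advising_agent", "read_degree_audit"))
print(guardrail.decide("advising_agent", "send_message"))

guardrail.recommend_only = True  # e.g. flipped after a failed safety check
print(guardrail.decide("advising_agent", "read_degree_audit"))
```

Checking scope before anything else means an out-of-scope request is denied even in recommend-only mode, so the fail-safe never widens what an agent can see or suggest.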

A survey of education leaders confirmed that clear ethical guidelines and the ability to oversee AI decisions are top factors for institutional trust. At Fortune 500 companies, AI governance has become a board-level priority as organizations scale deployment. At 8allocate, we implement allow-listed tools, role-scoped permissions, and human-in-the-loop checkpoints to manage edge cases and build trust in the agents.

An agentic AI system does not know things the way software with fixed rules does. Don’t give agents more power until it shows it can handle the current level responsibly.

Oleg Popov, AI solution architect at 8allocate

Telemetry and monitoring for AI agents

Telemetry for AI agents means monitoring a detailed audit trail of the AI’s decisions, tool usage, and actions. This is non-negotiable in education for the following reasons:

- Accountability and compliance. If a student challenges an AI-generated grade, you need logs showing what the agent considered and why. The EU AI Act may require such traceability for high-risk educational AI systems.

- Bias and error detection. By monitoring how the agent responds, you can spot patterns of mistakes or unfair behavior and fix them.

- Performance metrics. Track response times, task completion rates without human intervention, and deferral frequency.

- Continuous improvement. Transcripts from cases where agents escalated to humans become fine-tuning data. A/B testing of agent behaviors lets you iteratively refine prompts and tool strategies.
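
To illustrate the accountability point, here is a simplified audit trail that records what an agent considered for each decision and lets you pull the history for one disputed item. It uses only the Python standard library; the `AuditTrail` class, its field names, and the sample submissions are hypothetical, and a real deployment would write to durable, access-controlled storage rather than memory.

```python
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only, in-memory trail; a real system would use durable storage."""

    def __init__(self):
        self._lines = []

    def record(self, agent, action, subject, considered, outcome):
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "subject": subject,        # e.g. a submission ID, not raw student PII
            "considered": considered,  # inputs the agent used for the decision
            "outcome": outcome,
        }
        self._lines.append(json.dumps(entry))

    def explain(self, subject):
        """Return every logged entry for one subject, oldest first."""
        entries = [json.loads(line) for line in self._lines]
        return [entry for entry in entries if entry["subject"] == subject]


trail = AuditTrail()
trail.record("grading_agent", "score_essay", "submission-17",
             {"rubric": "essay-v2", "criteria_met": 3, "criteria_total": 4}, "B+")
trail.record("grading_agent", "score_essay", "submission-18",
             {"rubric": "essay-v2", "criteria_met": 4, "criteria_total": 4}, "A")

# A student challenges the grade on submission-17: show what was considered.
for entry in trail.explain("submission-17"):
    print(entry["action"], entry["considered"], entry["outcome"])
```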

Agent frameworks are maturing rapidly. For example, Microsoft’s AutoGen framework offers OpenTelemetry integration for tracing agent workflows. LangChain and LangGraph provide observability layers for chaining and routing between agents with full trace logging. CrewAI adds role-based agent coordination with built-in monitoring.

Learn best practices for structuring an AI product team in our article “How to Build and Structure an AI Development Team in 2026.”

Hallucinations and cost at scale

Misalignment and unpredictable cost spikes remain a concern.

- AI hallucinations. Don’t let the AI guess. Make it answer only from approved materials and clearly show the source of the information.

- Cost unpredictability. Plan costs in advance and set monthly spending limits per student so growth doesn’t create surprise bills. For example, model expenses for 10K, 25K, and 50K users before scaling.
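
A back-of-the-envelope forecast for the 10K, 25K, and 50K scenarios might look like the sketch below. Every number in `ASSUMPTIONS` (sessions per student, tokens per session, token price, and the per-student cap) is a made-up placeholder to be replaced with your own usage data and vendor pricing.

```python
def monthly_model_cost(users, sessions_per_user, tokens_per_session,
                       price_per_1k_tokens, cap_per_student):
    """Estimate monthly model spend, never exceeding the per-student cap."""
    per_student = sessions_per_user * tokens_per_session / 1000 * price_per_1k_tokens
    capped = min(per_student, cap_per_student)
    return round(users * capped, 2)


# Hypothetical planning assumptions, not real pricing.
ASSUMPTIONS = dict(
    sessions_per_user=20,      # sessions per student per month
    tokens_per_session=4000,   # prompt + completion tokens
    price_per_1k_tokens=0.01,  # USD
    cap_per_student=0.50,      # USD monthly spending limit per student
)

forecast = {users: monthly_model_cost(users, **ASSUMPTIONS)
            for users in (10_000, 25_000, 50_000)}
for users, cost in forecast.items():
    print(f"{users:>6} users: ${cost:,.2f} per month")
```

With a per-student cap in place, the worst-case bill grows linearly with enrollment, which is what makes it possible to budget before scaling.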

Implementation Roadmap of Agentic AI in Education

While AI agents offer significant benefits in efficiency and personalization, their implementation requires careful consideration of data privacy, algorithmic bias, and ensuring they complement rather than replace human instruction.

Below is a three-stage roadmap to integrate agentic capabilities into your EdTech product or institutional ecosystem.

Step 1. Launch a focused AI agent pilot

Early idea validation is essential. According to Forrester, 25% of planned AI spending may be deferred by 2027 due to ROI concerns.

- Pick 1-2 use cases with clear pain points and available data. For example, deploy an AI tutoring agent in an introductory programming course with historically high dropout rates. Pilots should focus on the early stages of the learning process to identify and address student needs early.

- Define success metrics upfront and capture qualitative feedback alongside quantitative data.

- Give the agent access only to the data it needs, such as LMS quiz results, syllabus content, or student records. APIs are ideal, but in early pilots secure CSV exports are sufficient.

- Build a minimal viable agent using rapid deployment frameworks such as AutoGen, LangChain, or secure SaaS platforms.

- Limit the agent to one course, one department, or a controlled user group. Collect usage data, monitor behavior, and iterate mid-pilot if needed.

Finally, document outcomes, issues, and mitigation steps. Trust enables product expansion.

Explore how 8allocate’s AI MVP Development Services can help you validate your agent idea within 4-6 weeks.

Step 2. Expand the agent pilot

With pilot results in hand, you scale agentic features to more users, more courses, more use cases.

- Secure leadership buy-in. Present pilot outcomes to leadership and align expansion with strategic priorities.

- Form a cross-functional task force that includes IT, student services, and student voices. This group refines policies, addresses concerns, and acts as institutional champions.

- Invest in change management. Provide workshops, documentation, and support channels.

- Expand the agent gradually, from one course to a department, from one agent to multiple agents. Keep humans in the loop.

- Harden infrastructure as you move from prototype to production: full LMS integration with SSO, automated data pipelines, proper data management, scalable cloud infrastructure. Leverage data-driven insights from student performance and institutional data to inform expansion decisions and support educational quality.

- Communicate wins. Share real student and faculty outcomes. Evidence replaces skepticism and builds trust in the product.

See the patterns for implementing agentic AI across industries, including EdTech and education, in our guide “TOP 50 Agentic AI Implementations: Strategic Patterns for Real-World Impact.”

Step 3. Scale the product

Agentic AI is fully integrated into your EdTech product and available for all customers.

- Enterprise deployment. Every student and instructor can access AI-powered support through centralized platforms.

- Scalability and cost optimization. The system must handle peak usage periods, such as finals, without degradation. Optimize costs through model routing, usage caps and cost forecasting across user growth scenarios.

- Automated monitoring and QA. Dashboards and alerts for accuracy drift, usage spikes, and anomalies. Periodically sample interactions for human quality review. Retrain or update models at least yearly.

- Institutionalize support and governance. Integrate AI literacy into faculty onboarding and student orientation. Update policies, and maintain compliance with FERPA, GDPR, and the EU AI Act.

Finally, measure the long-term impact of agentic AI in education. Did retention improve year-over-year in courses using AI tutors? Are graduation rates or job placement affected? Calculate AI ROI: compare the costs of running AI with efficiency gains and educational outcomes across multiple years of data.

Agentic AI Trends in Education for 2026 and Beyond

Here are 4 agentic AI trends in education for 2026. If you build an EdTech product, lead corporate L&D, or run an online school, these are the shifts you need to act on.

*This analysis pulls from market signals across reports by Gartner, Microsoft, Google, McKinsey, and more.

AI agents become product infrastructure

AI agents are shifting to core product architecture. In education, this is already happening: Microsoft is embedding Copilot directly into LMS environments starting Spring 2026. Increasingly, AI agents are being embedded into learning platforms to deliver adaptive educational content as part of core product infrastructure.

Multiagent systems (MAS)

Single-task bots are being replaced by AI agents that handle multi-step workflows across tasks and systems. Google’s AI Agent Trends 2026 report points in this direction, and Gartner also names multi-agent systems as a major technology trend for 2026.

As Anushree Verma, Sr Director Analyst at Gartner, explains:

“AI agents will evolve rapidly, progressing from task- and application-specific agents to agentic ecosystems.”

Anushree Verma, Sr Director Analyst at Gartner

AI fluency and orchestration skills

Organizations face a dual mandate: upskill people to work with AI agents while preserving the reasoning skills GenAI is eroding. Gartner predicts that 50% of organizations will introduce AI-free assessments through 2026. McKinsey has already deployed 20,000 internal agents and evaluates candidates on AI collaboration skills. By 2027, 75% of hiring processes will include AI proficiency testing (Gartner).

Personalized corporate training

AI agents are replacing static training with systems that adapt pathways, predict learner risk, and intervene before failure. These technologies are preparing the next generation for emerging job market demands by analyzing labor trends and creating targeted training programs. Microsoft targets skilling 500,000 people in AI by 2026. Forrester predicts that 30% of enterprises will mandate AI training. McKinsey predicts nearly every occupation will experience a skill shift by 2030.

How 8allocate Can Help You Add Agentic AI in Education

8allocate has spent over a decade helping EdTech teams deliver GenAI, ML, and agentic capabilities into products and internal operations. Within our AI agent development service, we build production-grade agent stacks end to end: multi-step workflows, tool integrations, knowledge/RAG, safety guardrails, and help businesses roll out AI agents securely.

We’ve supported over 100 companies worldwide, including enterprise players like GoIT, in developing AI tutor assistants and other AI-powered solutions, integrated within comprehensive learning platforms.

So whether you’re starting from scratch or drowning in disconnected AI tools, we can help you build robust agentic systems for education and EdTech, without sacrificing flexibility. Contact us to learn about our services and how we can help.

Still Got Questions on AI Agents in Education?

Quick Guide to Common Questions

What are the types of AI agents in education?

Here are the top examples of AI agents in education:

- AI tutor / study coach

- Student support agent

- Advisor / success agent

- Teacher/copilot agent

- Assessment/proctoring helper

- Operations/admin agent

- Content agent

- Educational AI agents

- AI assistants

What are the benefits of AI agents in education?

The key benefits of using AI agents in education are instant feedback, personalized learning paths and practice that adapt to each student’s needs, faster support and tutoring available 24/7, and a better student experience.

What if the AI agent gets it wrong with a student?

To reduce the risk of an AI agent being wrong with a student, treat the agent as assistive, not authoritative, and design the experience so learners can verify what they receive. For academic or policy answers, require sources/citations (or retrieved snippets). For high-stakes scenarios, route to human handoff by default. Add a clear “report an issue” loop and fix recurring failures fast. Ethical considerations, such as data privacy, algorithmic bias, transparency, and academic integrity, are essential when adopting AI agents in education. Human oversight and the development of ethical frameworks help ensure responsible AI use and address errors effectively. In practice, 8allocate, an AI solutions development company, implements allow-listed tools, role-scoped permissions, and human-in-the-loop checkpoints to handle edge cases and build trust.

What about data privacy? Can AI agents access sensitive student information?

Yes, an agent can access sensitive information only if you explicitly grant it, and it should be governed by the same (or stricter) rules as any system touching student records. You control exposure through role-based access, scoped APIs, and least-privilege design. That way, an advising agent can read a degree audit but never touch medical or unrelated records. You should minimize data access, log every sensitive call, and ensure institutional control over vendors and processing under FERPA/GDPR and internal policies, with compliance and legal reviewing the data flows before rollout.
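
As a sketch of least-privilege access, the example below lets an advising agent read a degree audit while silently withholding medical notes, and logs both what was granted and what was denied. The field names, role name, and `read_student_record` helper are hypothetical; in practice this logic would live in the integration layer in front of your SIS.

```python
# Hypothetical field-level scope: what each agent role is allowed to read.
ROLE_FIELDS = {
    "advising_agent": {"degree_audit", "enrolled_courses"},
}

access_log = []  # every sensitive call is recorded, including denied fields


def read_student_record(role, student_id, record, requested_fields):
    """Return only the fields this role may see, and log the access."""
    allowed = ROLE_FIELDS.get(role, set())
    granted = sorted(set(requested_fields) & allowed)
    denied = sorted(set(requested_fields) - allowed)
    access_log.append({"role": role, "student_id": student_id,
                       "granted": granted, "denied": denied})
    return {name: record[name] for name in granted if name in record}


# Made-up record for illustration only.
student_record = {
    "degree_audit": "92 of 120 credits complete",
    "enrolled_courses": ["MATH 201", "CS 110"],
    "medical_notes": "restricted",
}

view = read_student_record("advising_agent", "student-42", student_record,
                           ["degree_audit", "medical_notes"])
print(view)
print(access_log[-1])
```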

How do we handle mistakes or bias in AI agent responses?

You handle mistakes by assuming they will happen and engineering for containment: set expectations that AI can err, and make sure important decisions aren’t made by AI alone. Then back it with discipline: evaluation test sets, regression checks per release, and monitoring for repeated failure patterns. For bias, you need periodic audits across cohorts, clear guardrail policies, and a feedback pipeline that lets you correct prompt and tool behavior quickly when issues are reported. For instance, 8allocate, an AI solutions development company, manages AI bias by designing structured evaluation processes, creating test cases aligned with institutional policies, adding automated bias checks before release, and applying guardrails with human review for sensitive outputs.

What metrics should we look at to evaluate success of AI agents?

To evaluate the success of AI agents, focus on three core metric groups:

- Business or learning improvements (e.g., completion, retention, satisfaction, faster support resolution, achievement of defined learning objectives).

- Reliability and model trust signals (e.g., verified accuracy, escalation rate, citation correctness).

- Operational efficiency (e.g., latency, cost per session, tool-call success).

If metrics show high usage but high escalations, the system is not delivering value. The real success indicator is reliable resolution with measurable business impact.
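
A few of these metrics can be computed directly from per-session records, as in the sketch below. The session fields (`resolved`, `escalated`, `tool_calls`, `tool_calls_ok`, `cost_usd`) and the sample values are assumptions for illustration; map them to whatever your telemetry actually captures.

```python
def agent_metrics(sessions):
    """Summarize resolution, escalation, tool-call success, and cost per session."""
    total = len(sessions)
    if total == 0:
        raise ValueError("no sessions to evaluate")
    tool_calls = sum(s["tool_calls"] for s in sessions)
    tool_calls_ok = sum(s["tool_calls_ok"] for s in sessions)
    return {
        "resolution_rate": sum(s["resolved"] for s in sessions) / total,
        "escalation_rate": sum(s["escalated"] for s in sessions) / total,
        "tool_call_success": tool_calls_ok / tool_calls if tool_calls else None,
        "cost_per_session": sum(s["cost_usd"] for s in sessions) / total,
    }


# Made-up sample of four sessions.
sample_sessions = [
    {"resolved": True, "escalated": False, "tool_calls": 2, "tool_calls_ok": 2, "cost_usd": 0.25},
    {"resolved": True, "escalated": False, "tool_calls": 1, "tool_calls_ok": 1, "cost_usd": 0.25},
    {"resolved": False, "escalated": True, "tool_calls": 3, "tool_calls_ok": 1, "cost_usd": 0.50},
    {"resolved": True, "escalated": False, "tool_calls": 2, "tool_calls_ok": 2, "cost_usd": 0.50},
]

metrics = agent_metrics(sample_sessions)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

Reading resolution rate and escalation rate side by side is what exposes the “high usage, high escalations” pattern described above.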

How do we integrate AI agents with our existing educational systems (LMS, SIS, etc.)?

To integrate AI agents with existing systems like an LMS or SIS, the agent should connect through approved APIs (such as LTI for LMS integrations and secure SIS endpoints for student records). Integrating AI agents with a comprehensive learning platform enables more efficient resource allocation by optimizing educational resources through intelligent automation. An integration layer should handle authentication, permissions, and activity logging. This approach avoids duplicating sensitive data, enables centralized access control, and allows the agent to retrieve only the information it needs in context.

What is AI agent development cost?

The cost of AI agent development for education typically ranges from $50,000 to $250,000+, depending on scope, number of integrations (LMS/SIS/SSO), and governance requirements. The costs usually come in two parts:

- Initial AI build/integration: $50,000-$80,000 for a focused pilot; $80,000-$250,000+ for enterprise, multi-agent systems. This includes agent workflow design, tool/API integrations, knowledge/RAG setup (if needed), guardrails, evaluation/testing, and governance-ready rollout.

- Ongoing run costs: typically $4,000-$25,000+ per month, covering model usage, hosting/infrastructure, monitoring/observability, and maintenance/iteration (with costs trending higher as usage and SLA expectations scale).

For example, 8allocate, an AI solutions development company, runs a 1-2 week discovery phase to clarify requirements, define the MVP scope and data boundaries, and provide an accurate estimate for the AI agent MVP.

What are the biggest risks of using AI agents in education?

The biggest risk of using AI agents in education is giving incorrect guidance that harms a student’s learning, progress, or decisions. Close behind are privacy leakage and overexposure of student data, bias or unequal treatment across cohorts, security threats like prompt injection that can trigger data exfiltration via tools, and costs/latency spikes when scaled. These risks are manageable, but only if you treat agents like production systems with controls, monitoring, and clear human accountability.

How can we get started with an AI agent pilot?

To get started with an AI agent pilot and set up successful AI adoption in education, pick one high-impact use case where data access and boundaries are clear, and define success metrics upfront. Then partner with a reliable AI solutions development company like 8allocate to build an AI agent MVP in 4-6 weeks and validate the idea in production conditions. If you’re not sure what use case to prioritize, whether the right data exists, or how it should integrate with your LMS/SIS, start with AI consulting services to shape the pilot scope.